82% of Agent Failures Start Before the First Line of Code

The AI coding agent failure rate across enterprise engineering teams points to a consistent and surprising finding: 82% of task failures are traceable to the planning phase, not execution. The agent didn’t hallucinate. The model wasn’t underpowered. The failure was baked in before a single line of code was generated.

This is the root cause of the AI productivity paradox — and until teams address it, more powerful models won’t fix the problem.

The Number That Should Change How You Deploy AI

Let’s unpack what 82% actually means in practice.

When a coding agent fails at a complex task — wrong implementation, broken architecture, missed requirements, incomplete output — the cause is almost never the model’s capability ceiling. Research from enterprise AI adoption studies consistently shows the failure originates in one of three pre-execution conditions:

1. Insufficient context. The agent was given a task description, not a plan. It had no visibility into the existing architecture, adjacent systems, or implicit constraints. It made reasonable assumptions that turned out to be wrong.

2. Ambiguous scope. The task boundaries weren’t defined clearly enough for the agent to know when it was done. “Refactor the authentication module” means something different to every person who wrote the original code.

3. Missing acceptance criteria. Nobody specified what a successful outcome looks like. So the agent optimized for something measurable — like “it compiles” — rather than what the team actually needed.

None of these are execution problems. They’re planning problems. And they’re entirely preventable.

Why Engineering Teams Keep Repeating the Same Failure

Here’s what’s counterintuitive: teams know planning matters. Ask any senior developer whether you should spec out a task before handing it to an agent, and they’ll tell you yes, obviously.

But then watch what happens in practice.

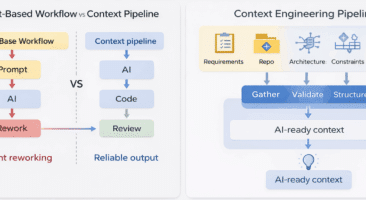

A developer needs to move fast. The task feels well-understood. They’ve done similar things before. So they prompt the agent directly — a paragraph of context, a brief description of what they want — and hope the model is smart enough to fill in the gaps.

Sometimes it works. More often, it doesn’t, and the developer spends the next two hours debugging output they didn’t expect, re-prompting with corrections, and ultimately rewriting significant portions by hand. According to independent research on developer AI tool usage, 60–70% of AI-generated code requires significant revision before it’s usable in production.

The cruel irony: developers using AI coding agents are working harder on rework, even as they perceive themselves as working faster. This isn’t a perception problem — it’s a measurement problem. Teams are measuring the speed of generation, not the quality of output.

The Compounding Cost of Pre-Execution Failure

A single failed agent task is annoying. At scale, it’s a budget and velocity crisis.

Consider the true cost of an agent task that requires three rounds of significant correction:

- Prompt → Review → Correct → Re-prompt cycles average 45–90 minutes per complex task, even with an experienced developer

- Each correction round requires the developer to re-establish context for themselves, for the agent, and often for their team

- Failed tasks that reach code review create downstream costs for reviewers who now need to understand what went wrong

- Rejected PRs reset the entire cycle

The Faros AI 2024 research placed developer time lost to context re-provision and agent iteration at 7+ hours per week per developer. For a 50-person engineering organization, that’s 350 hours of recovered capacity waiting on the table — not from better agents, but from better planning.

This is why the ROI picture for AI coding tools is so murky. Only 54% of organizations report clear ROI from their coding agent investments, despite near-universal adoption. The tools aren’t underperforming. The workflow surrounding them is.

What Pre-Execution Planning Actually Changes

Structured planning before agent execution doesn’t mean slowing down. It means front-loading the cognitive work that will happen anyway — either before the agent runs, or during the debugging cycle after it fails.

When teams implement a planning layer before handing tasks to coding agents, the data shows significant, measurable shifts:

Task first-attempt accuracy improves dramatically. Without structured planning, complex agent tasks succeed on the first attempt roughly 23% of the time. With a structured planning document — context, scope, acceptance criteria, edge cases — that number moves to 61%. The task doesn’t change. The agent doesn’t change. Only the quality of the input changes.

Iteration cycles shorten. Even when tasks require revision, agents working from structured plans require fewer correction rounds. The agent has the context to self-correct against explicit criteria rather than guessing at intent.

Review becomes faster. Code reviewers can evaluate output against a documented plan rather than reverse-engineering the developer’s original intention. This alone eliminates a significant source of review cycle friction.

Context doesn’t disappear between sessions. One of the most underappreciated costs of AI coding work is context re-provision — the work of reconstructing the understanding that existed at the start of a session when an agent loses context, a developer picks up a task the next morning, or a new team member joins a thread. Structured plans are persistent artifacts. They don’t disappear when the conversation window closes.

The Team-Level Problem That Individual Plans Don’t Solve

Here’s where the planning challenge becomes structural rather than individual.

A single developer can build a habit of planning before prompting. They can maintain their own planning documents, their own acceptance criteria, their own context artifacts. This helps their personal success rate significantly.

But engineering teams aren’t collections of isolated individuals working on isolated tasks. Tasks have dependencies. Multiple developers work in the same codebase. Senior engineers make architecture decisions that junior developers and agents need to execute against.

When planning is informal and individual, this coordination breaks down:

- Agent tasks are executed against local understanding that isn’t visible to the rest of the team

- Conflicting implementations emerge because two developers planned the same shared component differently

- Review cycles get longer because the plan exists only in the developer’s head

- When the developer is out, the context is gone

The failure mode at the team level isn’t the 82% pre-execution problem. It’s the invisibility of planning decisions across the organization — and the inability to verify that what was built matches what was planned, days or weeks after the planning conversation happened.

This is the coordination gap that individual planning habits can’t fix.

What This Means for Engineering Leaders

If you’re an Engineering Manager or CTO evaluating your AI coding investment, the data suggests a diagnostic reframe:

Don’t ask: “Are our agents good enough?”

Ask: “Are we giving our agents what they need to succeed?”

The agent capability gap is largely a solved problem at this point. The models available in 2026 — Claude, GPT-4 series, Gemini — can handle remarkable complexity when given the right context. The limiting factor is almost never model intelligence. It’s task preparation.

Don’t ask: “How do we reduce the time it takes to generate code?”

Ask: “How do we increase the percentage of generated code that ships?”

Velocity metrics measured at the generation stage are misleading. A developer who spends 20 minutes planning and 10 minutes reviewing clean agent output is dramatically more productive than a developer who spends 2 minutes prompting and 90 minutes debugging. But the first 20 minutes looks like “slow” in most productivity dashboards.

Don’t ask: “What AI tool should we adopt next?”

Ask: “What planning infrastructure should we build around the tools we have?”

The teams getting real ROI from coding agents aren’t using different agents. They’re using the same agents with structured planning workflows — and they’re verifying that what the agent built matches what was planned. That verification loop is what closes the cycle.

The Verification Gap: Where the 18% Lives

We’ve focused on the 82% of failures that originate in planning. What about the 18% that fail during execution — where the plan was sound but the output wasn’t?

This is the verification problem, and it’s distinct from the planning problem but equally important.

Without a structured plan as a verification artifact, how does a developer (or a reviewer) evaluate whether the agent succeeded? They read the code. They run the tests. They use their own judgment.

This works reasonably well for simple tasks. For complex implementations — multi-file changes, architectural modifications, integration work — human verification against unstructured memory is unreliable and slow.

When teams have structured plans, verification becomes comparison rather than judgment. Did the agent implement what we planned? Does this output satisfy the acceptance criteria we defined before the agent ran? These are answerable questions. “Did the agent do a good job?” is not.

The verification layer is what turns a planning document from a time investment into a compounding asset. Plan once. Execute. Verify against the plan. Every time.

Getting Started: Three Changes That Move the Number

If 82% of your agent failures are preventable through better planning, here’s where to start:

1. Make planning a team practice, not a personal habit. Individual developers who plan well improve their own success rates. But the coordination benefits require shared planning artifacts that the whole team can see, contribute to, and verify against. Move planning out of personal notes and into shared workflows.

2. Define acceptance criteria before agent execution, not after. The most valuable planning element is the one teams skip most often. “What does a successful outcome look like?” is the question that eliminates the most ambiguous failures. Get specific. Include edge cases. Make the definition visible.

3. Close the loop with structured verification. After an agent task completes, evaluate the output against the plan — not against general intuition. Did the agent do what the plan said? Where it deviated, was the deviation an improvement or an error? This feedback loop teaches the team, not just the agent.

These aren’t new concepts. They’re standard software engineering practices applied to the AI-assisted development workflow. The reason teams skip them with coding agents is that agents feel like they should be able to figure it out — and sometimes they do. The 82% is the reminder that “sometimes” isn’t a workflow.

The Actual Competitive Advantage

In 2025, adopting AI coding tools gave teams an edge. In 2026, nearly every engineering team has them.

The teams pulling ahead now aren’t the ones with access to better models. They’re the ones who figured out that the model is the easiest part of the problem. The hard part — and the competitive advantage — is the planning and verification layer that makes the model reliable at scale.

82% of agent failures start before the first line of code. That means 82% of the improvement available to your team is waiting not in the agent, but in what happens before you prompt it.

Brunel Agent is the planning and verification layer your AI coding tools are missing. Built for engineering teams who want structured planning, collaborative context, and a closed-loop verification workflow that works with any coding agent — Cursor, Claude Code, GitHub Copilot, or your own.

Sources: Faros AI 2024 Developer AI Adoption Report; METR AI Task Performance Research; Internal analysis of enterprise coding agent deployment patterns.