The Solo Developer Is the Bottleneck: Why Context Engineering Demands a Team

Context engineering, the practice of deliberately designing and delivering the right knowledge to AI agents before they write a line of code, is quickly becoming the defining skill of high-performing development teams. And yet almost every team is doing it wrong, because they’re doing it alone.

For decades, the image of a great developer has been someone who can sit alone, headphones on, and bend a codebase to their will. We’ve celebrated the 10x engineer, the solo architect, the “one throat to choke.” That model worked fine when humans were doing the coding.

It’s starting to fail now that agents are.

How We Work Now and Why It’s Broken

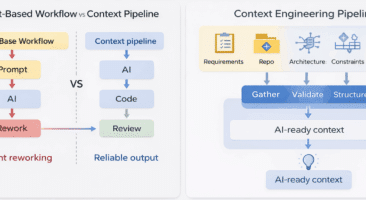

When a developer today sits down to use an AI coding agent, the workflow looks something like this: they open their tool of choice, type out a prompt, and hope the agent understands enough about their system to produce something useful. Sometimes it does. Mostly, it doesn’t — not without significant back-and-forth, corrections, and re-explanation.

This isn’t a prompt engineering problem. You can craft the most elegant prompt in the world, and the agent will still fail if it doesn’t know why your team chose a particular architecture, what that legacy service actually does, or how your authentication layer is supposed to behave across twelve dependent modules.

The research is unambiguous on this: 82% of failed agent tasks trace back to inadequate upfront planning. Developers spend 30–45% of their time providing context that agents should already have. Agent-generated code requires significant revision in 60–70% of cases — not because the model is bad, but because nobody planned for the agent.

The solo developer model, applied to AI-assisted work, is the bottleneck.

The Planning Gap Nobody Talks About

Here’s the uncomfortable truth about the current state of AI coding: the tools have become remarkably good at execution, yet almost nobody has focused on what happens before execution begins.

Senior developers have institutional knowledge locked inside their heads — architectural decisions, anti-patterns the team has learned to avoid, the reason a particular service is structured the way it is. Junior developers don’t have it. Agents definitely don’t have it. And in the current workflow, that knowledge never gets to either of them in a structured, usable form.

When a developer works with an agent solo, they’re essentially doing an impromptu, unstructured knowledge transfer every single session. They re-explain the codebase. They re-describe the constraints. They patch together context in real time and hope it holds long enough to get a useful output.

Then the session ends, and the agent forgets everything.

The next developer on the team, or even the same developer tomorrow, starts from zero.

This is context fragmentation. And it’s costing development teams an estimated 7+ hours per week per developer.

What Context Engineering Actually Is

Prompt engineering asks: How do I ask the AI to do this correctly?

Context engineering asks: What does the AI need to know to succeed?

The distinction matters enormously. Context engineering is the discipline of designing, managing, and delivering comprehensive context to AI systems before they are ever asked to act. It’s the difference between throwing a contractor into a building mid-construction and giving them a complete set of architectural blueprints first.

Done well, context engineering improves agent accuracy from 23% to 61%. It reduces iteration cycles by 40–60%. It makes institutional knowledge durable across sessions, across team members, and across time.

Done solo, it’s a band-aid. A single developer managing their own context library helps themselves. It does nothing for the team, and it dies the day they move to a new project.

Why This Has to Be a Team Sport

Here’s the key insight that most organizations are missing: context engineering, done right, is not an individual activity. It’s a collaborative planning discipline.

Think about what good context for an AI agent actually requires:

- Architectural knowledge — why the system is designed the way it is, what decisions were deliberately made, what patterns the team has agreed to follow

- Domain knowledge — what the business rules are, where the edge cases live, what constraints the product team has established

- Temporal knowledge — what’s being migrated away from, what’s being built toward, what’s actively in flight right now

- Team conventions — how code is reviewed, what quality standards apply, how conflicts between components are resolved

No single developer has all of this. It lives across the team — in the minds of senior engineers, in Slack threads, in old pull request comments, in architecture review documents, in conversations that happened six months ago in a conference room.

Getting this context into a form that AI agents can actually use requires the team to come together and build it together. It requires collaborative planning sessions where the right people surface the right knowledge, structure it, and make it available — not just to themselves, but to every agent every team member runs.
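As a rough illustration of what "structuring" that knowledge could mean, the four categories above can be modeled as a small shared data structure that several people contribute to. This is a minimal sketch, not a prescribed format: the names `SharedContext`, `ContextEntry`, and `CATEGORIES` are hypothetical, and the rendered layout is just one plausible way to hand the result to an agent.

```python
from dataclasses import dataclass, field

# The four kinds of context named in the list above (labels are illustrative).
CATEGORIES = ("architectural", "domain", "temporal", "conventions")


@dataclass
class ContextEntry:
    category: str  # one of CATEGORIES
    author: str    # who on the team contributed this knowledge
    text: str      # the knowledge itself, in plain language


@dataclass
class SharedContext:
    entries: list[ContextEntry] = field(default_factory=list)

    def add(self, category: str, author: str, text: str) -> None:
        if category not in CATEGORIES:
            raise ValueError(f"unknown category: {category}")
        self.entries.append(ContextEntry(category, author, text))

    def missing_categories(self) -> list[str]:
        """Categories nobody has contributed to yet."""
        present = {e.category for e in self.entries}
        return [c for c in CATEGORIES if c not in present]

    def render(self) -> str:
        """Flatten all contributions into one text block an agent can read."""
        lines = []
        for category in CATEGORIES:
            items = [e for e in self.entries if e.category == category]
            if not items:
                continue
            lines.append(f"## {category.title()}")
            lines.extend(f"- {e.text} (source: {e.author})" for e in items)
        return "\n".join(lines)


ctx = SharedContext()
ctx.add("architectural", "senior engineer", "Auth tokens are validated at the gateway only.")
ctx.add("temporal", "tech lead", "The legacy billing service is being migrated away from.")
print(ctx.render())
print("Still missing:", ctx.missing_categories())
```

The `missing_categories` check is the part that makes the team angle concrete: a single contributor will usually leave whole categories empty, and the gaps show who else needs to be in the room.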

What Collaborative Planning Sessions Look Like

The shift from solo to team-based context engineering isn’t abstract. It’s a concrete change in workflow.

Instead of a developer opening their agent tool and starting to type, the team establishes a shared planning session before significant work begins. In that session:

- Senior developers articulate architectural constraints and decisions in structured form

- Product and engineering align on scope, dependencies, and edge cases

- The team builds a shared context plan that can be exported directly to AI coding agents

- Everyone agrees on what “done” looks like, so the agent’s output can be verified against something concrete

The output of that session isn’t just a Jira ticket or a Confluence doc that nobody reads. It’s a structured, agent-ready plan — a package of context that any agent, run by any team member, can consume to dramatically increase the accuracy of what it produces.
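One way to picture such an agent-ready plan is a small record with the session's outputs as fields, serialized to a format any tool can read. The sketch below is an assumption for illustration only: the `Plan` class, its field names, and the choice of JSON are all hypothetical, not a format defined in this article.

```python
import json
from dataclasses import dataclass, field, asdict


@dataclass
class Plan:
    title: str
    constraints: list[str] = field(default_factory=list)   # architectural decisions
    dependencies: list[str] = field(default_factory=list)  # what the work touches
    edge_cases: list[str] = field(default_factory=list)    # agreed with product
    done_when: list[str] = field(default_factory=list)     # the definition of "done"

    def validate(self) -> None:
        # Without a definition of done there is nothing to verify output against.
        if not self.done_when:
            raise ValueError("plan needs at least one 'done' criterion")

    def export(self) -> str:
        """Serialize the plan so any agent, run by anyone, gets the same context."""
        self.validate()
        return json.dumps(asdict(self), indent=2, sort_keys=True)


plan = Plan(
    title="Add password reset",
    constraints=["Reuse the existing auth layer; do not add a second token store."],
    dependencies=["email service"],
    edge_cases=["Reset requested for an unknown address"],
    done_when=["Reset link expires after one use"],
)
print(plan.export())
```

Refusing to export a plan with no `done_when` entries mirrors the last bullet above: the agreed definition of "done" is what makes the agent's output checkable.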

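This is the planning layer that bookends agent execution: context goes in before the agent starts, and the agreed definition of “done” is what its output is checked against afterward. It changes how reliable AI-assisted development can be.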

The Competitive Divide Forming Right Now

Organizations that figure this out early will have a significant advantage. Not because they have better agents — every team has access to the same models. But because their agents are working from better foundations.

Teams that invest in collaborative context engineering will see agents that produce usable code on the first or second attempt rather than the fifth. They’ll see institutional knowledge that survives team changes. They’ll see junior developers producing senior-quality work because the context the senior team built is available to every agent they run.

Teams that continue working solo — one developer, one agent, one improvised context dump per session — will continue to see the same 60–70% revision rates, the same wasted hours, and the same mounting frustration that AI tools aren’t living up to their promise.

The agents are ready. The question is whether the teams are.

Where to Start

If you’re an engineering leader, the most important thing you can do right now is stop treating AI agent adoption as an individual productivity problem and start treating it as a team coordination challenge.

That means:

- Establishing planning rituals before significant agent-assisted work begins

- Building shared context libraries that the whole team maintains and contributes to

- Making context a first-class artifact — reviewed, versioned, and updated alongside code

- Measuring what your agents actually know versus what they need to know, and closing that gap deliberately
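The last item, measuring the gap, can start very simply. A minimal sketch, assuming the team can list the topics a task needs and the topics its shared context already covers (the function name `context_gap` and the topic labels are hypothetical):

```python
def context_gap(needed: set[str], provided: set[str]) -> dict:
    """Compare what a task needs the agent to know with what the context covers."""
    missing = sorted(needed - provided)
    # Coverage is the share of needed topics that are already documented.
    coverage = 1.0 if not needed else len(needed & provided) / len(needed)
    return {"missing": missing, "coverage": coverage}


needed = {"auth flow", "billing rules", "migration status", "review checklist"}
provided = {"auth flow", "review checklist", "deploy script"}

gap = context_gap(needed, provided)
print(gap["missing"])   # ['billing rules', 'migration status']
print(gap["coverage"])  # 0.5
```

Tracking the `missing` list over time gives the team a concrete backlog of knowledge to capture, rather than a general sense that the agent "doesn't get it."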

The prompt engineering era taught us that how you ask matters. The context engineering era is teaching us that what the AI knows before you ask matters more.

And what the AI knows — at the team level, at scale, with durability — is something no single developer can build alone.

How Brunel Agent Solves This

At Loadsys, we built Brunel Agent specifically to close this gap.

Brunel is an AI project planning platform designed for the whole team — developers, project managers, and stakeholders — to collaboratively build structured plans before any coding agent ever writes a line of code. It’s the planning and verification layer that bookends agent execution.

Here’s how it works:

Plan — Your team comes together in a shared Brunel workspace to capture architectural context, business requirements, acceptance criteria, dependencies, and constraints. Every role contributes what they know. Nothing gets lost in a Slack thread.

Export — Brunel generates a structured plan document that any AI coding agent can consume directly. Whether your team uses Cursor, Claude Code, GitHub Copilot, or any other tool, the agent starts with the context it needs, which gives it a much better chance of succeeding on the first attempt.

Execute — Your developers hand the plan to their agent of choice. Brunel doesn’t generate code and doesn’t compete with your existing tools — it makes them dramatically more effective.

Verify — After the agent completes its work, Brunel inspects the codebase and compares what was built against the original plan, catching deviations, missed requirements, and architectural violations before they ever reach code review.
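To make the idea of plan-versus-result verification concrete, here is a generic sketch of the comparison. It is not Brunel's implementation or API, which this article does not describe; the `verify` function, the `VerificationReport` type, the requirement IDs, and the path patterns are all hypothetical, and real verification would need to inspect the code itself rather than trust a list of satisfied requirements.

```python
from dataclasses import dataclass
from fnmatch import fnmatch


@dataclass
class VerificationReport:
    missed_requirements: list[str]
    out_of_scope_files: list[str]

    @property
    def passed(self) -> bool:
        return not self.missed_requirements and not self.out_of_scope_files


def verify(
    required: list[str],       # requirement IDs from the plan
    satisfied: set[str],       # IDs confirmed as implemented (assumed to be known)
    allowed_paths: list[str],  # glob patterns the plan says the work may touch
    changed_files: list[str],  # files the agent actually modified
) -> VerificationReport:
    missed = [r for r in required if r not in satisfied]
    # A changed file matching no allowed pattern is flagged as a deviation.
    out_of_scope = [
        f for f in changed_files
        if not any(fnmatch(f, pattern) for pattern in allowed_paths)
    ]
    return VerificationReport(missed, out_of_scope)


report = verify(
    required=["R1", "R2"],
    satisfied={"R1"},
    allowed_paths=["src/auth/*"],
    changed_files=["src/auth/login.py", "src/billing/invoice.py"],
)
print(report.passed)               # False
print(report.missed_requirements)  # ['R2']
print(report.out_of_scope_files)   # ['src/billing/invoice.py']
```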

The result: first-attempt accuracy improves from 23% to 61%. Iteration cycles drop by 40–60%. And the institutional knowledge your team built in that planning session doesn’t disappear when the session ends — it persists, versioned alongside your code, available to every agent every team member runs.

Brunel Agent is currently available for early access. If your team is serious about getting real, consistent results from AI coding agents, we’d love to show you what planning-first development looks like.

Join the Brunel Agent waitlist →

The teams that win the AI-assisted development era won’t be the ones with the best individual prompters. They’ll be the ones who learned to plan together.